Blog

Backgammon AI

H. Altan Birler

A friend challenged me to write a simple backgammon game.

There definitely had to be an AI you had to play against, that's for sure. However, I was terrible at playing backgammon. There was no way I was going to successfully write an AI that played backgammon proficiently.

What could I do? Maybe I could write one that learned to play by itself.

I researched suitable algorithms and finally implemented a slightly modified version of the TD-Gammon algorithm by Gerald Tesauro (which had a great Performance/Time-to-implement ratio :D ).

Some sources that I used:

- http://www.bkgm.com/articles/tesauro/tdl.html

- http://www.cs.cornell.edu/boom/2001sp/Tsinteris/gammon.htm

- https://medium.com/jim-fleming/before-alphago-there-was-td-gammon-13deff866197

I first implemented the algorithm in Python, but due to performance issues I ported my implementation to C++. I could have used one of the hardware accelerated libraries in Python but I wanted to implement the algorithm from scratch, and a compute-heavy algorithm implemented in Python usually doesn't perform so well (but definitely looks nice!).

You can find my final implementation in C++ here:

https://github.com/hbirler/backgammoncpp.

How did the algorithm perform?

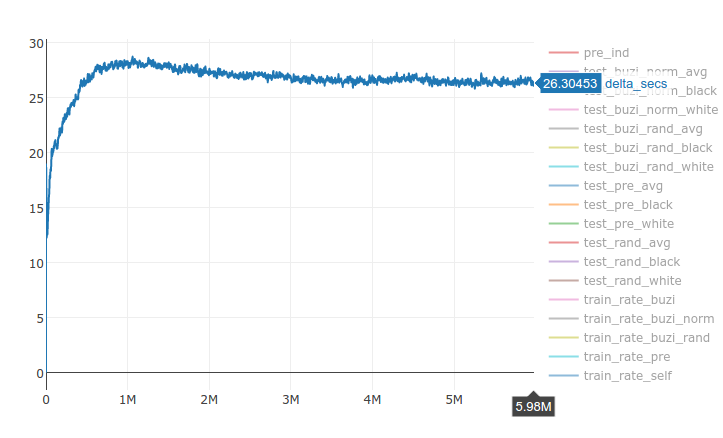

The algorithm can learn 1000 games in around 27 seconds (1000 games played against itself)

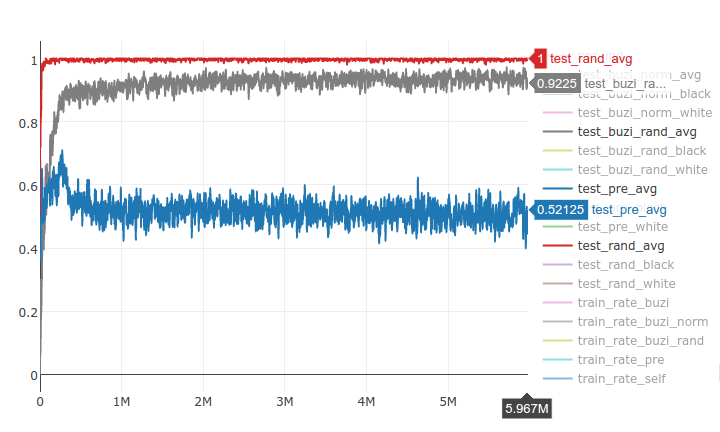

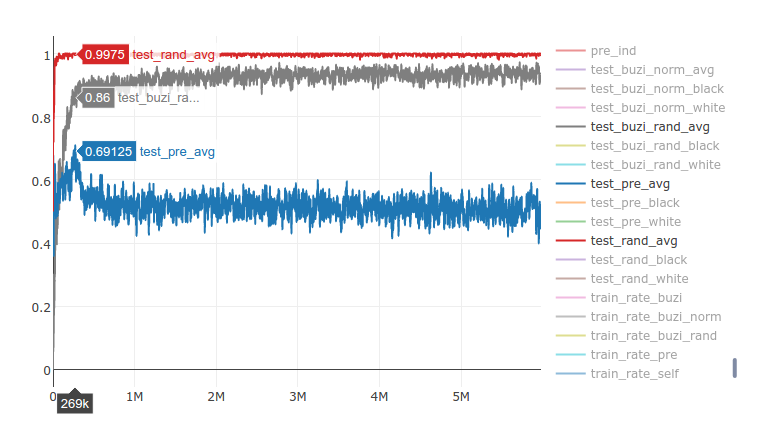

The algorithm can learn to consistently beat a randomly playing opponent pretty quickly (20k games), but takes more time to beat a badly written greedy AI (named buzi) at an acceptable rate*. I also tracked how well it performed against an older version of itself (pre) to check whether it was truly learning.

*acceptable rate: My friends started to say that the AI was an enjoyable opponent when it's test_buzi_rand was around 85%. When it got over 90% some friends started consistently losing and quit the game. But a couple of expert friends could still beat the AI at a 23 rate even after the AI had played 2M games (around 91% test_buzi_rand).

The learning rate rises until around the 300k mark and drops down significantly to around 55%. The performance doesn't improve much after 2M games.

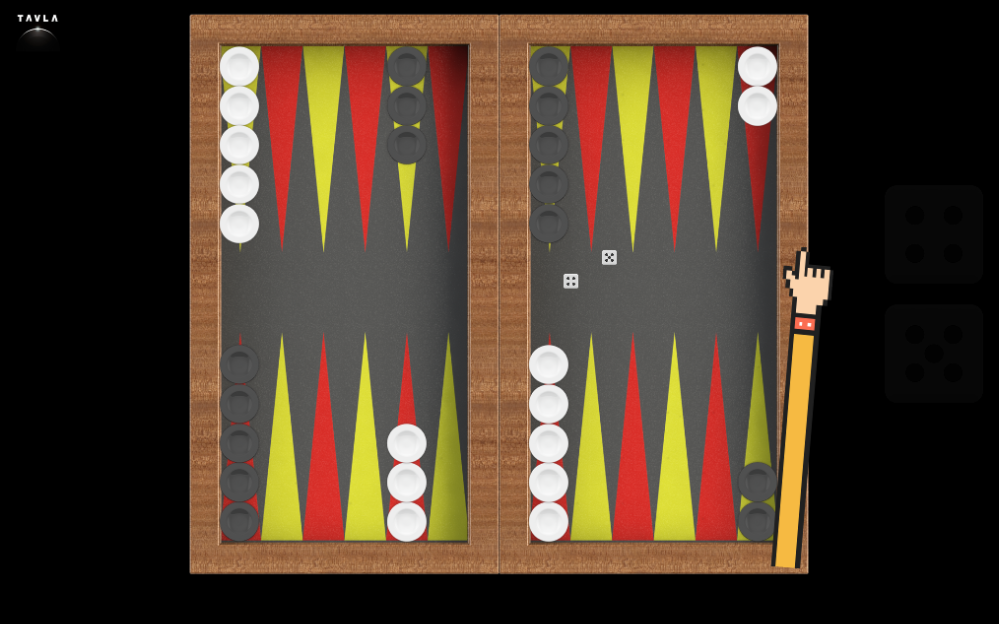

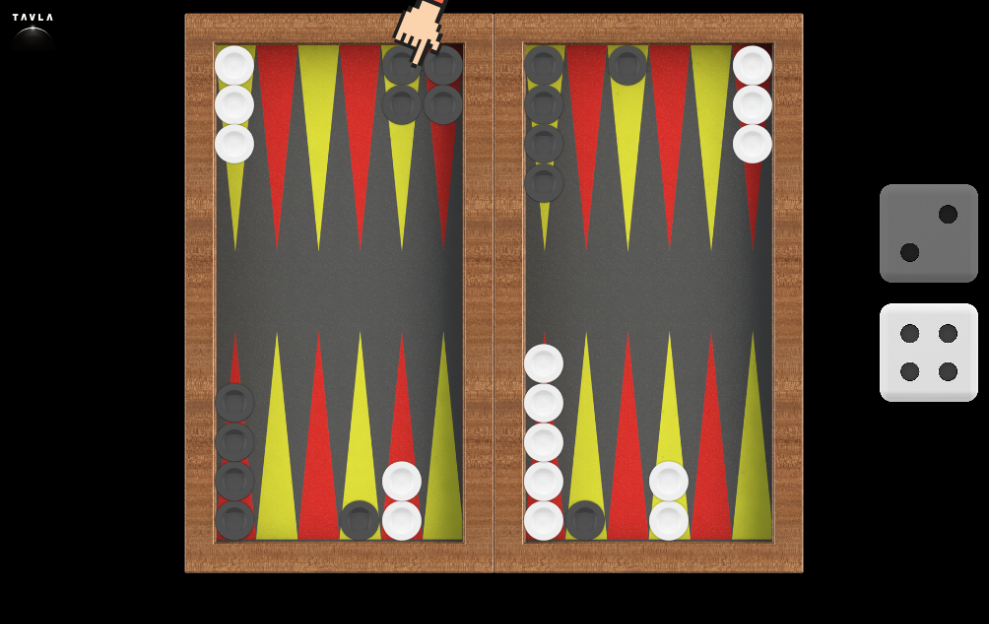

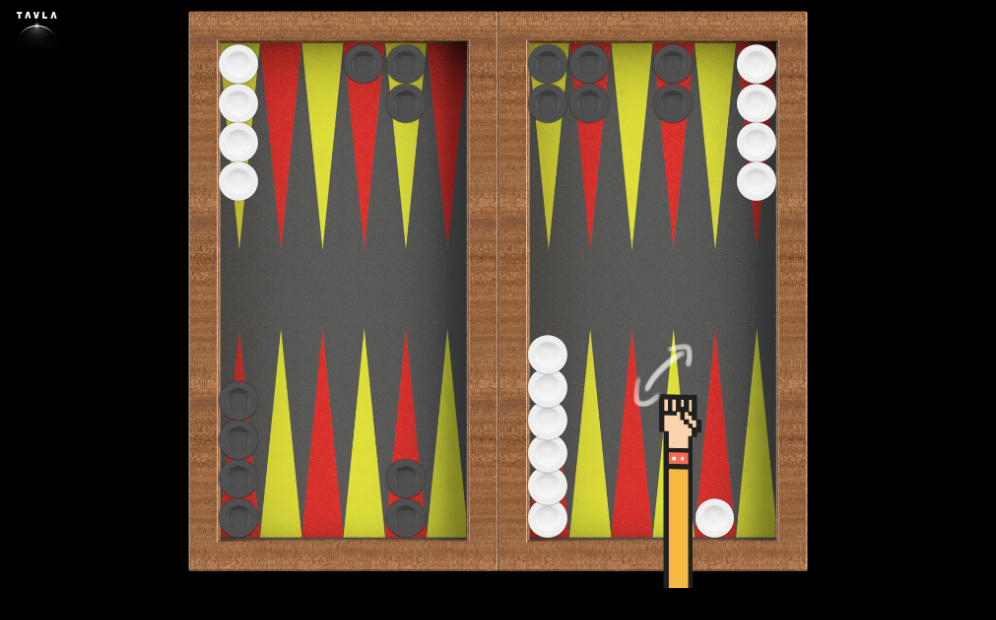

Where is the game?

I am planning to complete the game in a couple of months (maybe more?). I am writing it on HTML5/Javascript and planning to port it to mobile using Apache Cordova.

Some screenshots (things might change):